If you don’t have a Customer Data Integration solution in your business, then you’re missing out. Find out why.

About

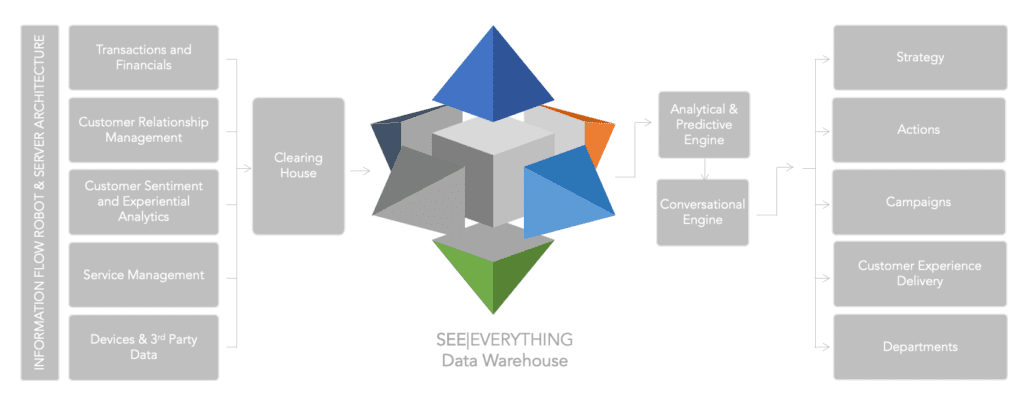

Customer Data Integration (CDI) is the process of consolidating, and then properly managing, information about your customers from all available business sources. This includes all known contact details, the financial data associated with each customer, and information gathered through marketing activities.

Encanvas Customer Data Platform (CDP) incorporates advanced tooling for Customer Data Integration (CDI) as standard.

The problem Customer Data Integration solves

Most businesses want to maximize their customer experience by leveraging data. Achieving that requires an aggregated data mart that presents a single version of the truth. What stands in the way is the incumbent approach to data management, which formed over years as departments invested in software to support their own discrete processes. Companies use a blend of software applications and third-party tools alongside their core enterprise resource planning (ERP) system to fulfil their processes. The result is a fragmented and unregulated data environment.

Businesses face an ever more regulated operating environment. They also face greater competition in their local markets because of Internet and mobile phone use. In this climate, executives can ill afford to ignore the need for appropriate controls over who uses customer and financial data, and how.

Creating a complete single-version-of-the-truth

An important business driver for integration services is the desire of executives to harvest data from across the enterprise in order to make informed decisions. The drive towards a data-driven culture demands that systems connect to one another. The integrity of the data that executives review is a major sticking point.

A survey of 442 business executives around the world by Harvard Business Review found that corporate decision makers have major concerns about access to, availability of, and the quality of internal and outside data. The result is reduced confidence in their decision-making ability.

Moreover, nearly half of the global respondents said their lack of confidence stems from a lack of information or a lack of easy access to data. The findings are puzzling given the emergence of big data techniques, the proliferation of global networks and the sheer processing power contained even in mobile devices.

One reason for the disconnect between big data and decision making, the Harvard researchers found, is that “silos of data, typically imprisoned in customer, financial, or production systems, are frequently inaccessible by individuals outside the functional group.”

In this regard:

- 43% of survey respondents said important external or internal data was missing;

- 42% said data was inaccurate or obsolete; and

- 33% said they “couldn’t process information fast enough.”

Technology building blocks

Bringing customer data together from wherever it resides involves a series of discrete activities:

- Data connectors and Application Programming Interfaces (APIs) – These are software intermediaries that allow two applications to talk to each other. In a customer data integration project, data is likely to be harvested from four or more systems. The customer data platform must therefore be able to integrate with a diverse range of data sources, file types, protocols and systems.

- Voting systems – There may be more than one source for any data asset, and not all sources are equally reliable. Customer data integration platforms use voting systems to recommend the best source of data when more than one source exists.

- Data validation and protection against malware – Uploaded data must be free from malware and have a reasonable level of integrity. To ensure this, data integration systems and processes should validate files ‘at source’, before the data goes anywhere. In practice, files are run through validation scripts before they are processed. The data integration tooling should then monitor and report any failed uploads.

- Data cleansing, normalization, deduping and quarantining – Sourced data is unlikely to be of high quality. To improve it, a series of data cleansing routines can be applied to data files. These commonly include:

  - Cleaning – Removing gaps, stray spaces and spurious characters.

  - Normalizing – Applying data transformation routines to standardize the format of common fields such as email addresses and phone numbers.

  - De-duping – The same system may hold more than one record for the same customer. Where it does, the platform may need to identify the latest record and either delete the older records or merge them.

  - Quarantining – Similarly, it may be necessary to set aside (‘quarantine’) poor-quality records that require manual review.

- Data upload – As the term suggests, data uploading takes a transformed file and loads it into the data mart. This can require mapping and concatenating fields to mirror the data mart’s architecture. Uploads must be recorded, and it must be possible to roll back an upload for administrative purposes.

- Data extraction and software robots – Sometimes it’s not possible to connect directly to a live data source. There may be technical obstacles, load-balancing issues, or business continuity and security concerns. In some cases, we have seen companies unable to gain authority from their IT service providers to source data they own from computing systems they don’t. Where direct connection is not possible, data extraction tools and software robots can be used to harvest the data instead.
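A voting system can be built in several ways. The sketch below shows one simple approach, weighted voting. Each source system votes for its value with a trust weight, and sources that agree pool their votes. The value with the highest total wins. The source names and weights in `SOURCE_WEIGHTS` are hypothetical, and this is an illustration rather than how any particular platform does it.

```python
from collections import defaultdict

# Hypothetical trust weights per source system; real weights would be tuned, often per field.
SOURCE_WEIGHTS = {"erp": 3.0, "crm": 2.0, "marketing": 1.0}


def vote(candidates, weights=SOURCE_WEIGHTS):
    """Pick the best value for one field from a {source: value} mapping.

    Each source votes for its value with its trust weight and identical values
    pool their votes. Empty values abstain. Ties go to the value backed by the
    single most-trusted source.
    """
    totals = defaultdict(float)
    strongest = defaultdict(float)
    for source, value in candidates.items():
        if value in (None, ""):
            continue
        weight = weights.get(source, 0.5)  # unknown sources get a low default weight
        totals[value] += weight
        strongest[value] = max(strongest[value], weight)
    if not totals:
        return None
    return max(totals, key=lambda v: (totals[v], strongest[v]))


def golden_record(records_by_source, fields, weights=SOURCE_WEIGHTS):
    """Build one consolidated record by voting field by field."""
    return {
        field: vote({src: rec.get(field) for src, rec in records_by_source.items()}, weights)
        for field in fields
    }
```

Under weighted voting, several lower-trust sources that agree can outvote a single higher-trust source. A fixed source-priority list would not allow that. Which behaviour is right depends on the data.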
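The cleansing steps above can be sketched in a few lines of Python. This is a minimal illustration, not Encanvas tooling. The record fields (`customer_id`, `email`, `phone`, `updated`), the quality rules and the “keep the latest record” de-duping policy are all assumptions chosen for the example. The sketch covers cleaning, normalizing, de-duping and quarantining. It does not attempt malware scanning of uploaded files.

```python
import re
from datetime import date

# Assumed record shape: dicts with customer_id, email, phone and updated (ISO date string).
REQUIRED_FIELDS = ("customer_id", "email", "updated")
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def clean(value):
    """Cleaning: turn non-printable characters into spaces, then collapse whitespace."""
    text = "".join(ch if ch.isprintable() else " " for ch in str(value or ""))
    return " ".join(text.split())


def normalize(record):
    """Normalizing: lower-case emails; reduce phone numbers to digits, keeping a leading '+'."""
    rec = {key: clean(val) for key, val in record.items()}
    rec["email"] = rec.get("email", "").lower()
    phone = rec.get("phone", "")
    digits = re.sub(r"\D", "", phone)
    rec["phone"] = "+" + digits if phone.startswith("+") else digits
    return rec


def passes_checks(rec):
    """Basic quality checks; a record that fails is quarantined for manual review."""
    if any(not rec.get(field) for field in REQUIRED_FIELDS):
        return False
    if not EMAIL_PATTERN.match(rec["email"]):
        return False
    try:
        date.fromisoformat(rec["updated"])
    except ValueError:
        return False
    return True


def cleanse(records):
    """Return (clean_records, quarantined); duplicates keep the most recently updated record."""
    latest = {}
    quarantined = []
    for raw in records:
        rec = normalize(raw)
        if not passes_checks(rec):
            quarantined.append(rec)
            continue
        current = latest.get(rec["customer_id"])
        if current is None or date.fromisoformat(rec["updated"]) > date.fromisoformat(current["updated"]):
            latest[rec["customer_id"]] = rec
    return list(latest.values()), quarantined
```

This sketch de-dupes by discarding older records. Merging duplicates field by field is the alternative mentioned in the text. It would keep information that only an older record holds.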
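Uploads must be recorded and reversible. The sketch below illustrates one way to do that with Python’s built-in `sqlite3` module. The schema, the table names and the `id`/`first`/`last`/`email` source fields are all hypothetical. Each upload is logged as a batch, and rows are stored as versions tagged with their batch. Rolling back a batch deletes its versions, so the previous values become current again, while the log entry survives with a flag.

```python
import sqlite3


def open_mart(path=":memory:"):
    """Create a small, hypothetical data-mart schema: an upload log plus versioned customer rows."""
    conn = sqlite3.connect(path)
    conn.executescript(
        """
        CREATE TABLE IF NOT EXISTS upload_log (
            batch_id    INTEGER PRIMARY KEY AUTOINCREMENT,
            source      TEXT NOT NULL,
            row_count   INTEGER NOT NULL,
            uploaded_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP,
            rolled_back INTEGER NOT NULL DEFAULT 0
        );
        CREATE TABLE IF NOT EXISTS customer_versions (
            version_id  INTEGER PRIMARY KEY AUTOINCREMENT,
            customer_id TEXT NOT NULL,
            full_name   TEXT,
            email       TEXT,
            batch_id    INTEGER NOT NULL REFERENCES upload_log(batch_id)
        );
        """
    )
    return conn


def upload(conn, source, rows):
    """Map source fields onto mart columns, log the batch and return its id."""
    with conn:  # single transaction: the whole batch is committed, or none of it
        batch_id = conn.execute(
            "INSERT INTO upload_log (source, row_count) VALUES (?, ?)", (source, len(rows))
        ).lastrowid
        conn.executemany(
            "INSERT INTO customer_versions (customer_id, full_name, email, batch_id) "
            "VALUES (?, ?, ?, ?)",
            # Mapping and concatenation: the source's first/last names become one full_name.
            [(r["id"], f'{r["first"]} {r["last"]}'.strip(), r["email"], batch_id) for r in rows],
        )
    return batch_id


def rollback(conn, batch_id):
    """Undo an upload: delete its row versions but keep the log entry, flagged as rolled back."""
    with conn:
        conn.execute("DELETE FROM customer_versions WHERE batch_id = ?", (batch_id,))
        conn.execute("UPDATE upload_log SET rolled_back = 1 WHERE batch_id = ?", (batch_id,))


def current_customers(conn):
    """Return the latest surviving version of each customer."""
    return conn.execute(
        """
        SELECT customer_id, full_name, email
        FROM customer_versions AS v
        WHERE version_id = (
            SELECT MAX(version_id) FROM customer_versions WHERE customer_id = v.customer_id
        )
        ORDER BY customer_id
        """
    ).fetchall()
```

Keeping versions instead of overwriting rows makes rollback a simple delete, at the cost of extra storage.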

Considerations

The currency of data

Not all data is accurate and complete all of the time. Take accounting information, for example: invoicing sometimes lags behind sales. Reading data at the wrong point in the accounting calendar can therefore return unhelpful and potentially misleading information. It is very important that the architects of any data harvesting project have a deep appreciation of the business they are serving.
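One way to encode this kind of business knowledge is a simple guard. It treats a month’s accounting data as settled only once invoicing has had time to catch up. The function names and the 10-day `lag_days` default are illustrative assumptions, not a recommendation. The right allowance depends on how each business closes its books.

```python
from datetime import date, timedelta


def month_end(year, month):
    """Last calendar day of the given month."""
    if month == 12:
        first_of_next = date(year + 1, 1, 1)
    else:
        first_of_next = date(year, month + 1, 1)
    return first_of_next - timedelta(days=1)


def is_settled(year, month, today, lag_days=10):
    """True once `lag_days` have passed since the end of the month.

    `lag_days` is an assumed allowance for invoicing to catch up with sales.
    """
    return today > month_end(year, month) + timedelta(days=lag_days)
```

A harvesting job could call `is_settled` before taking a snapshot of a period’s figures, and skip or flag periods that are still open.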

Support for multi-threading, multi-sourcing and multi-linking

Data integration is difficult to get right and poses many technical challenges. One of them is system latency: unless sessions are maintained, connections to third-party systems can drop.

- Multi-threading, as the term is used here, is in essence about the receiving system giving sourcing systems a periodic ‘nudge’ (a keep-alive) to remind them that the session is still needed.

- Multi-sourcing happens when a system harvests data from more than one system concurrently.

- Multi-linking happens when specific rows of table data are selectively extracted from the databases of sourcing systems.

All three of these capabilities are needed in Customer Data Integration projects.
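Multi-sourcing and dropped connections can be sketched with Python’s standard `concurrent.futures` module. Each source is fetched on its own thread. A fetch that fails with `ConnectionError` is retried with a short back-off. The fetch functions are hypothetical stand-ins for real connectors. Retrying is a different technique from the keep-alive ‘nudge’ described above. It is shown because it is a common way to cope with connections that drop.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def with_retry(fetch, attempts=3, delay=0.1):
    """Call `fetch`, retrying when the connection drops (raises ConnectionError)."""
    for attempt in range(1, attempts + 1):
        try:
            return fetch()
        except ConnectionError:
            if attempt == attempts:
                raise  # give up and let the caller see the failure
            time.sleep(delay * attempt)  # simple linear back-off


def harvest(sources, max_workers=4):
    """Multi-sourcing: fetch from every source concurrently.

    `sources` maps a source name to a zero-argument fetch function returning rows.
    If any source still fails after its retries, the error is re-raised here.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(with_retry, fetch) for name, fetch in sources.items()}
        return {name: future.result() for name, future in futures.items()}
```

Fetching sources in parallel means that the slowest source, rather than the sum of all of them, determines how long a harvest takes.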

About Encanvas

Encanvas is an enterprise software company that specializes in helping businesses create exceptional customer experiences.

From Low Code to Codeless

Better than code-lite and low-code, we created the first no-code (codeless) enterprise application platform to release creative minds from the torture of having to code or script applications.

Use Encanvas in your software development lifecycle to remove the barrier between IT and the business. Coding and scripting are the biggest reason why software development has traditionally been unpredictable, costly and unable to produce best-fit software. Encanvas uniquely automates coding and scripting. With our live wireframing approach, business analysts can create the apps you need in workshops, working across the desk with users and stakeholders.

When it comes to building apps that create a data culture and orchestrate your business model, there’s no simpler way to install and operate your enterprise software platform than AppFabric. Every application you create on AppFabric adds more data to your single-version-of-the-truth data insights. That’s because we’ve designed AppFabric to create awesome enterprise apps that use a common data management substrate, so you can architect and implement an enterprise master data management plan.

Encanvas supplies a private-cloud Customer Data Platform that equips businesses to harvest their customer and commercial data from all sources, cleanse and organize it, and leverage its fullest value in a secure, regulated way. Our retrofittable solution bridges existing data repositories and cleanses and organizes their data to present a useful data source. It then makes that data available 24×7 to authorized internal stakeholders and third parties, in a regulated way that ensures adherence to data protection and FCA regulatory standards.

Encanvas Secure and Live (‘Secure&Live’) is a High-Productivity application Platform-as-a-Service. This enterprise applications software platform equips businesses with the tools they need to design and deploy applications at low cost. It achieves this by removing coding and scripting tasks and the overheads of programming applications. Unlike its rivals, Encanvas Secure&Live is completely codeless (not just Low-Code), so it removes the barriers between IT and the business. In short, it’s the fastest (and safest) way to design, deploy and operate enterprise applications.

Learn more by visiting www.encanvas.com.

The Author

Ian Tomlin is a management consultant and strategist who helps organizational leadership teams grow by telling their story, designing and orchestrating their business models, and making conversation with customers and communities. He serves on the management team of Encanvas and works as a virtual CMO and board adviser for tech companies in Europe, America, and Canada. He can be contacted via his LinkedIn profile or followed on Twitter.
